AI Content Detection Tools Emerge Amid Ethical Concerns

A new wave of AI content detection tools aims to distinguish human-written text from text generated by models like ChatGPT-5. As generated content improves in quality, these tools estimate the likelihood of AI authorship from linguistic patterns. The harder it becomes to tell the two apart, the greater the concern for online credibility and search engine optimization (SEO). The emergence of these detection mechanisms highlights a growing need for transparency as AI-generated text proliferates in academic and editorial spaces.

The strategic implications are significant. The reliability of digital information is under threat, and SEO rankings may be affected as search engines favor authentic content. Educators and journalists face ethical dilemmas over integrity and trust, since undetected AI writing could undermine academic standards and public confidence. The development of these detection tools addresses immediate concerns and also marks a pivotal moment in how we engage with AI-generated material, signaling the need to balance technology with human oversight.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

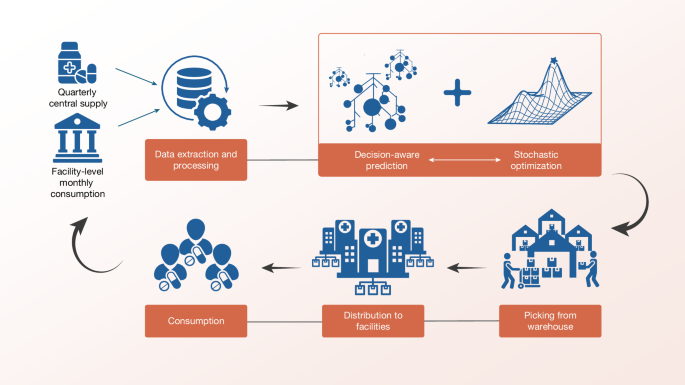

Sierra Leone Deploys Decision-Aware ML for Medicine Access

OpenAI Highlights Math as Pathway to AGI Progress

IBM Advances LLMs with Granite 4.1 Release