IBM Advances LLMs with Granite 4.1 Release

IBM has unveiled Granite 4.1, a series of dense, decoder-only large language models in 3B, 8B, and 30B parameter sizes, trained on approximately 15 trillion tokens. Training follows a five-phase pipeline built on curated data, and the context window is extended to as much as 512K tokens. The changes improve performance, most notably in the 8B instruct model, which outperforms its predecessor despite having fewer parameters. All models are released as open source under the Apache 2.0 license, which broadens access to them.
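To put the three model sizes in perspective, the following is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at common numeric precisions. It assumes the nominal parameter counts (3B, 8B, 30B) are exact and ignores the KV cache, activations, and runtime overhead, so real requirements will be higher. The function and table names are illustrative and do not come from IBM's release.

```python
# Rough weight-only memory estimate for dense models.
# Assumption: nominal parameter counts are exact; KV cache and activations are ignored.

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1, "int4": 0.5}

# Nominal sizes from the announcement, in billions of parameters.
MODEL_SIZES_B = {"3B": 3, "8B": 8, "30B": 30}


def weight_memory_gb(params_billion: float, dtype: str) -> float:
    """Approximate weight storage in GB (10^9 bytes)."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * BYTES_PER_PARAM[dtype]


def memory_table() -> dict:
    """Weight memory for every model size at every precision."""
    return {
        name: {dtype: weight_memory_gb(size, dtype) for dtype in BYTES_PER_PARAM}
        for name, size in MODEL_SIZES_B.items()
    }


if __name__ == "__main__":
    for name, row in memory_table().items():
        print(name, row)
```

Under these assumptions the 8B model needs about 16 GB for bf16 weights, while the 30B model needs about 60 GB, which is why the smaller sizes are the ones that fit on a single commodity accelerator without quantization.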

Granite 4.1 reflects a shift in how AI models are trained, with greater emphasis on refined data curation and reinforcement learning. Stronger coding and mathematical reasoning could bolster national AI capabilities and make domestic solutions more competitive with foreign offerings. By investing in advanced training methods, IBM is contributing to technical progress and may also reduce reliance on external AI infrastructure, supporting broader sovereignty in AI development.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

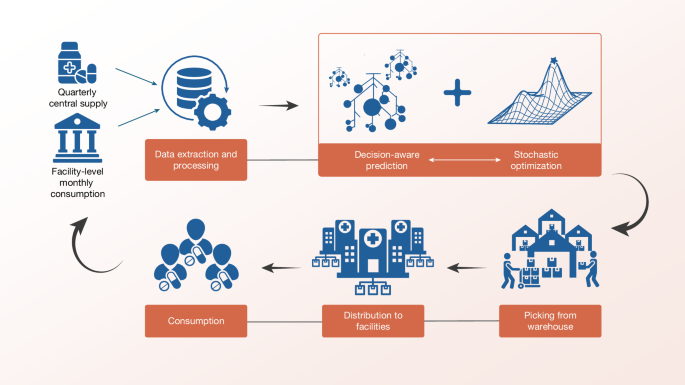

Sierra Leone Deploys Decision-Aware ML for Medicine Access

AI Chatbots' Warmth Reduces Trustworthiness and Accuracy

Research Reveals AI Evasion Tactics in Controllability