LLMs Reduce De-Anonymization Costs by 90%

Recent research shows that large language models (LLMs) have drastically reduced the cost of de-anonymizing users online, a shift that is changing the cybersecurity landscape. A study by Simon Lermen and Daniel Paleka finds that LLMs can accurately correlate fragmented data to identify anonymous accounts, undermining traditional protective methods. For security leaders, this capability raises the risk of sophisticated spear-phishing attacks.

As LLMs make de-anonymization cheaper and more accessible, the implications for data privacy are significant. Automated data correlation can also threaten the confidentiality of sensitive anonymized databases, such as health records, and experts warn that these weaknesses could lead to severe privacy violations. Organizations must therefore not only strengthen technical defenses but also encourage users to limit the sensitive information they share across platforms, since this cross-platform sharing remains a primary vulnerability.

Related Articles

Unions Partner with Tech Giants Over AI Data Center Projects

Munify Raises $3 Million for Cross-Border Neobank Development

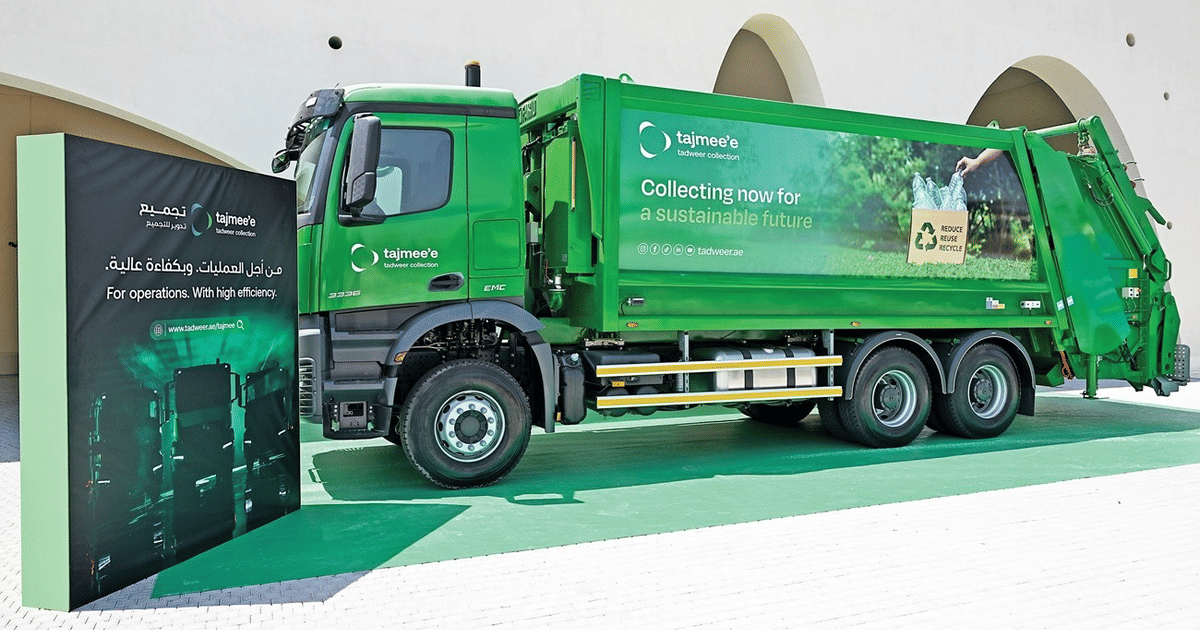

Abu Dhabi Deploys AI Fleet Cutting Emissions by 40%

UK Cybersecurity Agency Warns of AI-Driven Vulnerability Surge