Zhang Paper Challenges Deep Learning Theories

In 2016, Zhang et al.'s paper "Understanding deep learning requires rethinking generalization" raised significant questions about how well classical statistical learning theory applies to deep learning. The authors showed that standard neural networks can fit training sets whose labels have been assigned at random. As a result, capacity-based measures such as VC dimension and Rademacher complexity cannot by themselves explain why the same networks generalize well on real data, even when they have far more parameters than training examples. The paper is widely credited with unsettling established views in deep learning theory and with prompting a search for new frameworks to explain how modern neural networks generalize.
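The Rademacher complexity point can be made concrete with a small sketch. The snippet below is an illustration only, not code from the paper: it estimates empirical Rademacher complexity (the average best-case correlation between a hypothesis class and random ±1 labels) for two toy classes. The "memorizer" is an assumed stand-in for any model expressive enough to fit arbitrary labels on the sample, as the paper reports deep networks can; the 1-D threshold class is an assumed low-capacity contrast. All function names and sample sizes are hypothetical.

```python
import random


def rademacher_memorizer(n, trials, rng):
    """Class that can realize ANY labeling of the n sample points.

    The best hypothesis simply copies the random signs, so the
    correlation with every draw of sigma is exactly 1.
    """
    total = 0.0
    for _ in range(trials):
        sigma = [rng.choice((-1, 1)) for _ in range(n)]
        total += sum(s * s for s in sigma) / n
    return total / trials


def rademacher_thresholds(xs, trials, rng):
    """1-D threshold classifiers: predict +1 if x > t else -1, or the negation."""
    n = len(xs)
    order = sorted(range(n), key=lambda i: xs[i])
    total = 0.0
    for _ in range(trials):
        sigma = [rng.choice((-1, 1)) for _ in range(n)]
        # Threshold below every point: all predictions are +1.
        corr = sum(sigma)
        best = abs(corr)  # abs() covers the negated classifier
        for i in order:
            # Moving the threshold past point i flips its prediction to -1.
            corr -= 2 * sigma[i]
            best = max(best, abs(corr))
        total += best / n
    return total / trials


rng = random.Random(0)
n_points = 200
xs = [rng.random() for _ in range(n_points)]

r_mem = rademacher_memorizer(n_points, 100, rng)
r_thr = rademacher_thresholds(xs, 100, rng)

print("memorizer estimate:", r_mem)
print("threshold estimate:", r_thr)
```

A class that fits every random labeling has empirical Rademacher complexity 1, the largest possible value, so a generalization bound built on it says nothing useful. The threshold class scores far lower, which is the regime where such bounds are informative.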

The implications of this work are far-reaching: it challenges entrenched beliefs within the AI community and highlights the need for new ways to evaluate and predict how well neural networks generalize. As researchers develop revised complexity measures, both academic inquiry and practical AI work may shift away from conventional capacity-based analysis towards approaches that account for the data and the training procedure. That shift could in turn inform how models are designed and assessed across domains.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

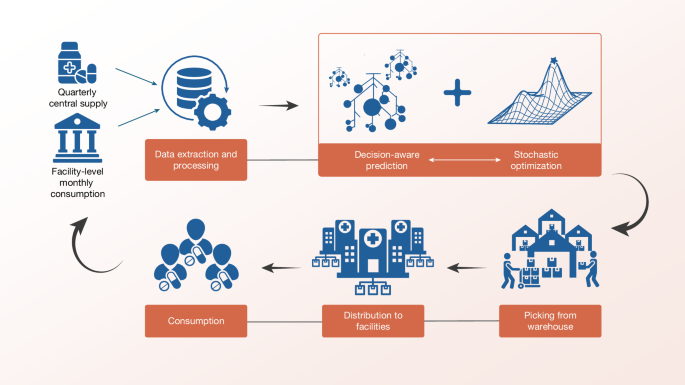

Sierra Leone Deploys Decision-Aware ML for Medicine Access

OpenAI Highlights Math as Pathway to AGI Progress

IBM Advances LLMs with Granite 4.1 Release