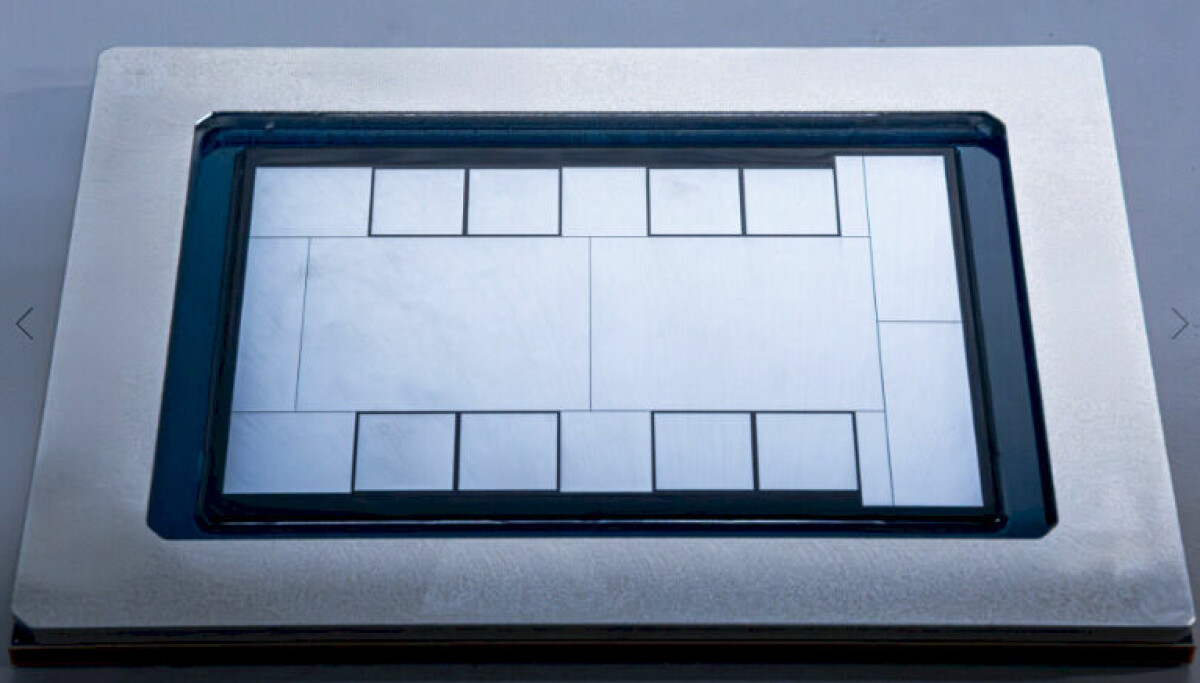

Meta Develops MTIA Compute Engine for AI Efficiency

Meta Platforms is advancing its MTIA (Meta Training and Inference Accelerator) compute engine to improve the efficiency of AI workloads that operate on very large datasets. The company's architecture takes a split approach, using GPUs with high-bandwidth memory alongside CPUs that handle data management, so that it can run Deep Learning Recommendation Models (DLRMs) effectively. This split design is meant to serve the billions of users interacting on its platforms, where both memory capacity and processing power are limiting factors.
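To make the memory-versus-compute tension in a DLRM concrete, here is a minimal toy sketch in plain Python. It is an illustration under stated assumptions, not Meta's implementation: every name (`build_model`, `lookup_and_pool`, `dense_layer`, `predict`), the table sizes, and the random weights are hypothetical. The sketch does not model hardware placement; it only separates the sparse embedding lookups, which are dominated by memory access, from the dense layers, which are dominated by arithmetic.

```python
import math
import random


def lookup_and_pool(table, ids):
    """Sparse, memory-bound step: gather embedding rows by id and sum them."""
    pooled = [0.0] * len(table[0])
    for i in ids:
        for d, value in enumerate(table[i]):
            pooled[d] += value
    return pooled


def dense_layer(x, weights, bias):
    """Dense, compute-bound step: matrix-vector product followed by ReLU."""
    return [
        max(0.0, sum(w * v for w, v in zip(row, x)) + b)
        for row, b in zip(weights, bias)
    ]


def pairwise_dots(vectors):
    """Feature interaction: dot product of every distinct pair of vectors."""
    out = []
    for i in range(len(vectors)):
        for j in range(i + 1, len(vectors)):
            out.append(sum(a * b for a, b in zip(vectors[i], vectors[j])))
    return out


def build_model(num_tables=2, rows=1000, dim=4, num_dense=3, seed=0):
    """Build a toy model with random weights (all sizes are assumptions)."""
    rng = random.Random(seed)
    tables = [
        [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(rows)]
        for _ in range(num_tables)
    ]
    bottom_w = [[rng.uniform(-0.5, 0.5) for _ in range(num_dense)] for _ in range(dim)]
    num_pairs = (num_tables + 1) * num_tables // 2
    top_w = [rng.uniform(-0.5, 0.5) for _ in range(dim + num_pairs)]
    return {
        "tables": tables,
        "bottom_w": bottom_w,
        "bottom_b": [0.0] * dim,
        "top_w": top_w,
        "top_b": 0.0,
    }


def predict(dense_features, sparse_ids, model):
    """Return a click-style probability for one example."""
    bottom = dense_layer(dense_features, model["bottom_w"], model["bottom_b"])
    pooled = [lookup_and_pool(t, ids) for t, ids in zip(model["tables"], sparse_ids)]
    top_in = bottom + pairwise_dots([bottom] + pooled)
    logit = sum(w * v for w, v in zip(model["top_w"], top_in)) + model["top_b"]
    return 1.0 / (1.0 + math.exp(-logit))


model = build_model()
probability = predict([0.5, 1.0, -0.2], [[1, 5, 9], [42]], model)
```

In a production recommender the embedding tables are far larger than this toy's 1,000 rows, which is why where the tables live and how quickly they can be read matter as much as raw arithmetic throughput.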

Meta's MTIA development points to a broader reshaping of AI compute strategy, one that favors robust architectures combining several kinds of hardware. However, the approach also exposes Meta's reliance on Nvidia's advanced GPUs, which could deepen its dependency on an external supplier. As AI models evolve rapidly, Meta must keep adapting its infrastructure to rising compute demands, which suggests that achieving independent AI capabilities will remain a challenge.