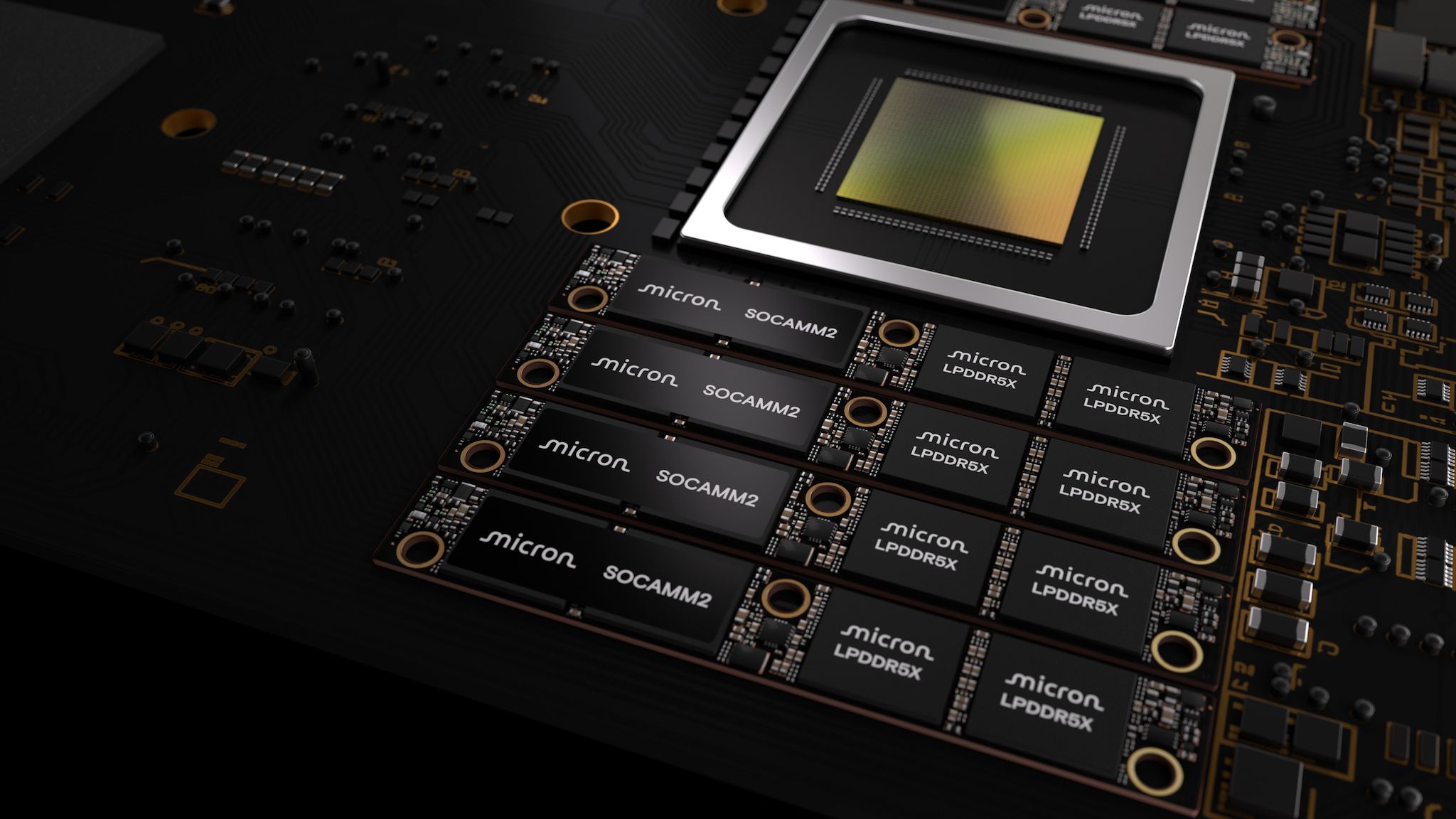

Micron Unveils 256GB SOCAMM2 Memory for AI Datacenters

Micron has introduced the first 256GB SOCAMM2 memory modules, aimed at AI data centers. The new module raises capacity by 33% over its predecessor, the 192GB module, and allows up to 2TB of memory to be attached to each CPU. Built on 32Gb monolithic LPDDR5X dies, the modules also deliver 66% greater power efficiency and are compatible with the liquid cooling systems that high-performance AI servers depend on.
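The capacity figures can be checked with simple arithmetic. The sketch below uses only the numbers quoted above (192GB, 256GB, 2TB). The module count per CPU is derived from them, not stated by Micron, and it assumes binary units (1TB = 1024GB).

```python
# Figures quoted in the article.
OLD_MODULE_GB = 192
NEW_MODULE_GB = 256
MAX_PER_CPU_GB = 2 * 1024  # "up to 2TB"; assumes 1 TB = 1024 GB


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100


# Per-module capacity gain of the 256GB module over the 192GB module.
capacity_gain = percent_increase(OLD_MODULE_GB, NEW_MODULE_GB)

# Derived, not quoted: how many 256GB modules add up to 2TB per CPU.
modules_per_cpu = MAX_PER_CPU_GB // NEW_MODULE_GB

# What the same number of slots would hold with the older 192GB modules.
old_total_gb = modules_per_cpu * OLD_MODULE_GB

print(f"Capacity gain per module: {capacity_gain:.1f}%")
print(f"Modules implied per CPU: {modules_per_cpu}")
print(f"Same slots with 192GB modules: {old_total_gb} GB")
```

Under these assumptions, the 2TB figure corresponds to eight modules per CPU, and the same eight slots filled with 192GB modules would total 1.5TB.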

The strategic implications are substantial. More memory per CPU lets servers keep larger contexts in memory, which speeds up AI processing and reduces Time To First Token (TTFT). With memory now critical to AI performance, Micron's advance supports the growing demand for efficient, powerful computing infrastructure. It also gives organizations competing for AI leadership a reason to invest heavily in the technology, and it could lessen dependence on foreign suppliers for high-capacity memory.