China Advances AI Safety Governance Research Report

Key Points

- CAICT releases 2025 AI safety governance report with key findings.

- Report identifies new security risks and governance challenges.

- China aims for greater AI autonomy in governance standards.

The China Academy of Information and Communications Technology (CAICT) has published its 2025 AI Safety and Security Governance Report, which was favored overwhelmingly in a recent vote. The report details emerging security risks in AI, including vulnerabilities highlighted by international research organizations such as the CISPA Helmholtz Center for Information Security and the Open Worldwide Application Security Project (OWASP). It stresses the need for vigilance as AI capabilities advance and introduce unknown risks related to value alignment, and it cites the Stanford AI Index Report, signaling consistency with international benchmarks.

The report reflects China's growing involvement in establishing AI governance frameworks and indicates a strategic shift toward minimizing reliance on foreign technology standards and promoting national governance. By addressing AI safety and the potential misuse of open-source tools, CAICT's research may catalyze developments that enhance China's self-sufficiency in AI while contributing to the global dialogue on AI governance standards.

Free Daily Briefing

Top AI intelligence stories delivered each morning.

Related Articles

Unions Partner with Tech Giants Over AI Data Center Projects

Munify Raises $3 Million for Cross-Border Neobank Development

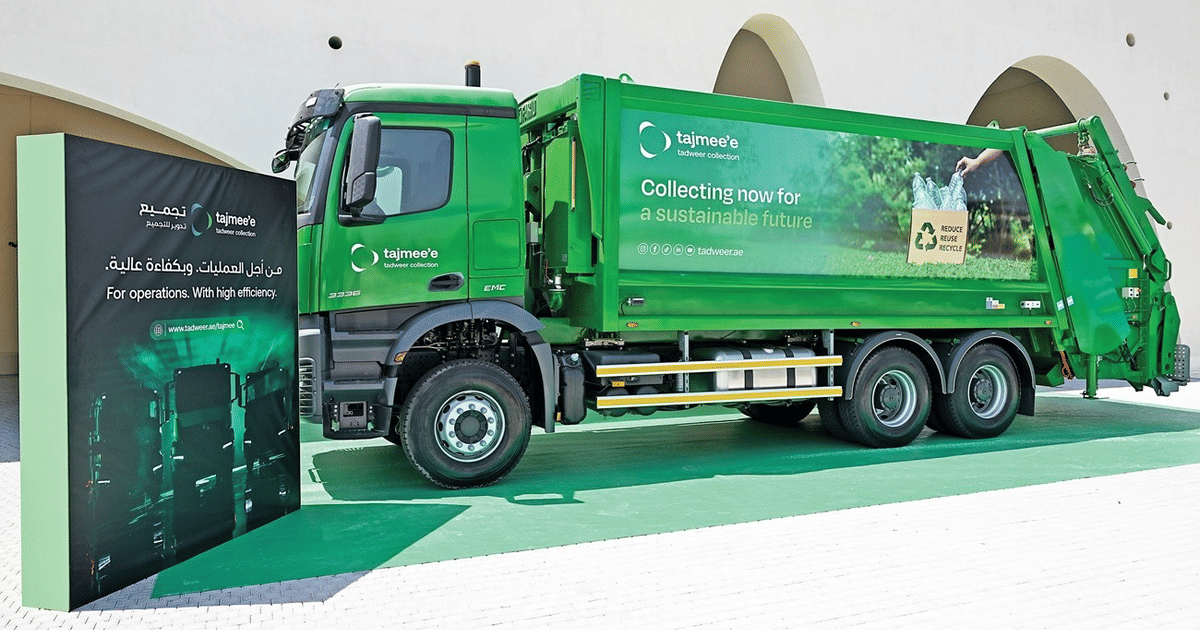

Abu Dhabi Deploys AI Fleet Cutting Emissions by 40%

UK Cybersecurity Agency Warns of AI-Driven Vulnerability Surge