AWS Partners with Cerebras for Enhanced AI Inference

Key Points

- Cerebras CS-3 and Trainium optimize AI inference processes.

- Reduced latency achieved through parallel prefill and serial decode.

- Collaboration enhances AI capabilities but may increase reliance on cloud services.

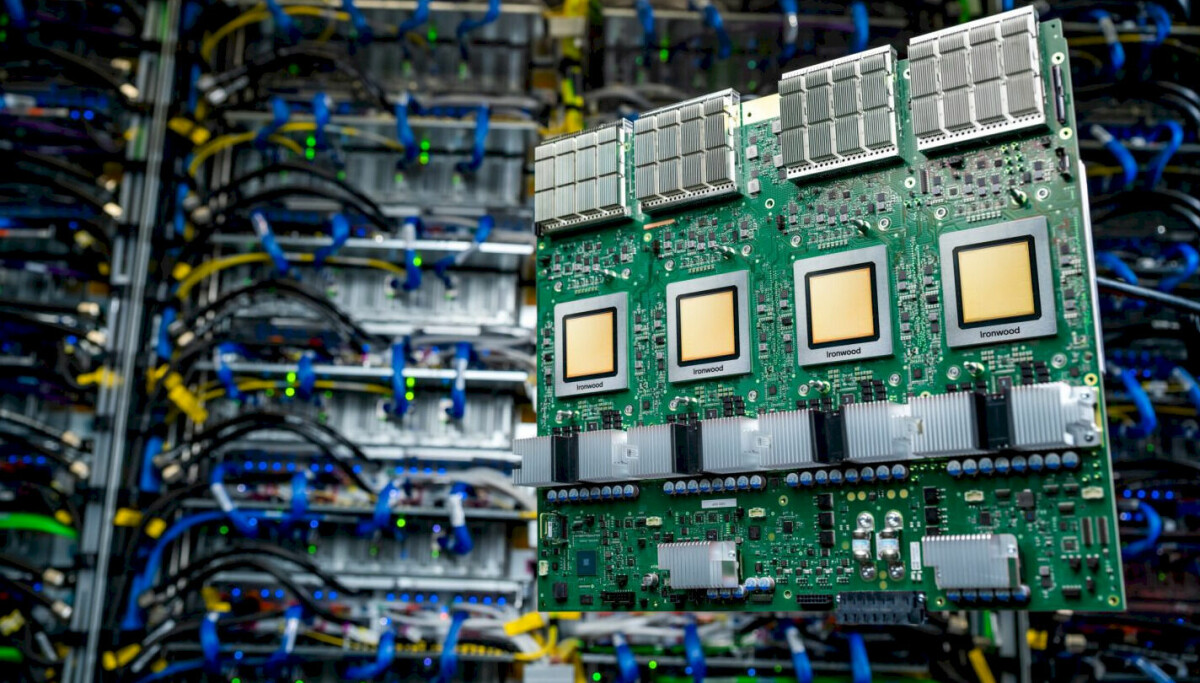

AWS and Cerebras have announced a partnership to improve AI inference performance. The setup combines Cerebras' CS-3 technology with AWS's Trainium processors and splits inference into two phases: a prefill phase, which processes the prompt in parallel, and a decode phase, which generates output serially, one token at a time. Splitting the work this way is intended to significantly reduce latency. The collaboration aims to deliver efficient AI computation for a range of industry applications.
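To make the prefill/decode split concrete, here is a minimal toy sketch in Python. It is not the AWS or Cerebras implementation, and it involves no real model or hardware: the function names (`prefill`, `decode_step`, `generate`) and the arithmetic inside them are hypothetical stand-ins chosen only to show the data dependencies. Prefill can handle every prompt token independently, while each decode step needs the result of the one before it.

```python
from typing import List


def _encode(token: int) -> int:
    # Hypothetical stand-in for the per-token state a real model would cache.
    return (token * 31 + 7) % 101


def prefill(prompt: List[int]) -> List[int]:
    """Build the cache for the whole prompt in one pass.

    Each entry depends only on its own token, so in principle all
    entries could be computed at the same time (the parallel phase).
    """
    return [_encode(t) for t in prompt]


def decode_step(cache: List[int]) -> int:
    """Produce one new token from everything cached so far."""
    return sum(cache) % 50


def generate(prompt: List[int], max_new_tokens: int) -> List[int]:
    """Run prefill once, then decode serially, one token per step."""
    cache = prefill(prompt)
    output: List[int] = []
    for _ in range(max_new_tokens):
        token = decode_step(cache)    # depends on all earlier tokens
        output.append(token)
        cache.append(_encode(token))  # the next step must wait for this
    return output


if __name__ == "__main__":
    print(generate([1, 2, 3], max_new_tokens=3))
```

Because the loop in `generate` cannot start step *n+1* until step *n* has finished, decode is the latency-sensitive part of the pipeline, whereas prefill is limited mainly by how much work can be done at once. That difference in workload shape is the motivation for treating the two phases separately.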

Faster inference points toward more responsive AI systems, which could change how organizations use cloud-based AI services. As AWS continues to expand its AI infrastructure, however, concern is growing about dependence on external platforms for critical AI processing. The partnership underscores advances in technology, but it may also raise questions about data sovereignty and the future of domestic AI autonomy.
