Transformers Architecture Affects Observability and Error-Cr

Key Takeaways

- New insights into transformer model architecture and observability.

- Findings impact error detection in AI models.

- Architecture choices influence monitoring effectiveness during training.

A recent arXiv preprint reports that autoregressive transformers can make confident errors, and that these errors can be detected only if the model retains internal signals that output confidence alone does not reveal. The study ties observability to both architecture and training method, framing it in terms of how linearly readable decision quality is from mid-layer activations. It finds that architecture significantly affects how well this signal is preserved, with notable differences across model variants and configurations.
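To make "linear readability from mid-layer activations" concrete, the sketch below fits a linear probe on synthetic data and compares it with output confidence as an error detector. It is an illustration under stated assumptions, not the study's method or data: the activations, the error direction, the confidence values, and the helper names `fit_linear_probe` and `auroc` are all hypothetical.

```python
import numpy as np

def auroc(scores, labels):
    """Rank-based AUROC: chance that a random error outscores a random non-error."""
    order = np.argsort(scores)
    ranks = np.empty(len(scores))
    ranks[order] = np.arange(1, len(scores) + 1)
    n_pos = labels.sum()
    n_neg = len(labels) - n_pos
    return (ranks[labels == 1].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def fit_linear_probe(X, y, lr=0.1, steps=500):
    """Logistic-regression probe trained with plain gradient descent."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        grad = p - y
        w -= lr * X.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b

# Synthetic stand-in for mid-layer activations (assumption, not real model data).
rng = np.random.default_rng(0)
n, d = 2000, 32
is_error = rng.integers(0, 2, size=n)  # 1 = the model's answer was wrong
direction = rng.normal(size=d)
direction /= np.linalg.norm(direction)
# Errors shift the activations along one direction, so they are linearly readable.
acts = rng.normal(size=(n, d)) + 3.0 * is_error[:, None] * direction
# Output confidence is high for every example and carries no error signal.
confidence = rng.uniform(0.8, 1.0, size=n)

train, test = slice(0, 1000), slice(1000, None)
w, b = fit_linear_probe(acts[train], is_error[train])

probe_auroc = auroc(acts[test] @ w + b, is_error[test])
conf_auroc = auroc(1.0 - confidence[test], is_error[test])
print(f"probe AUROC: {probe_auroc:.3f}, confidence AUROC: {conf_auroc:.3f}")
```

In this toy setup the probe separates errors from correct answers well, while confidence performs near chance, because the data was built that way. With a real model, the size of that gap at a given layer is the kind of quantity the study describes as varying with architecture.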

The implications are substantial for AI developers and policymakers. The findings suggest that architecture selection in transformer models matters not only for performance but also for whether errors can be detected at all. As models grow more complex and are deployed in critical applications, understanding the relationship between architecture and observability will be essential for improving reliability and trust in AI systems. The research could influence future architecture designs and training practices, and inform governance frameworks.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

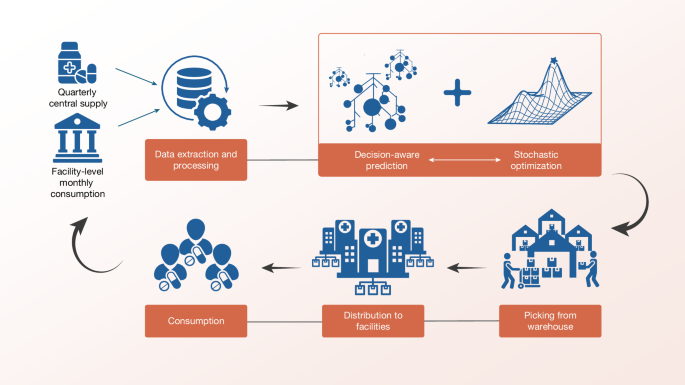

Sierra Leone Deploys Decision-Aware ML for Medicine Access

OpenAI Highlights Math as Pathway to AGI Progress

IBM Advances LLMs with Granite 4.1 Release