Microsoft Launches Local AI Model BitNet for Efficient Invo

Key Points

- Microsoft's BitNet model enables AI deployment on local machines.

- Its low-bit architecture reduces memory usage and compute requirements.

- Local execution reduces dependency on cloud environments for AI applications.

Microsoft has introduced BitNet b1.58, a native low-bit language model built to run AI tasks efficiently on local machines. The model uses ternary weights (-1, 0, +1) and was designed from the ground up for low precision, which significantly reduces memory and compute requirements while maintaining strong performance. To realize these efficiency gains, BitNet must be installed and run through the optimized bitnet.cpp C++ implementation; standard libraries do not take advantage of its architecture.
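To make the ternary-weight idea concrete, here is a minimal Python sketch. It is not Microsoft's implementation and does not use bitnet.cpp: it assumes a simple per-tensor scheme that scales weights by their mean absolute value, then rounds and clips to -1, 0, or +1. The function names, the sample weights, and the 2-billion-parameter count are hypothetical and chosen only for illustration. The second part shows the arithmetic behind the "1.58" in the name: a three-valued weight carries log2(3), roughly 1.58, bits of information.

```python
import math


def ternarize(weights, eps=1e-8):
    """Map float weights to {-1, 0, +1}.

    Illustrative assumption: scale by the mean absolute value of the
    tensor, then round and clip. Not taken from bitnet.cpp.
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    quantized = [max(-1, min(1, round(w / scale))) for w in weights]
    return quantized, scale


def bits_per_ternary_weight():
    """Information content of one three-valued weight, in bits."""
    return math.log2(3)


def estimated_weight_bytes(num_params, bits_per_weight):
    """Rough storage for the weights alone (ignores activations and overhead)."""
    return num_params * bits_per_weight / 8


# Hypothetical sample weights, for illustration only.
weights = [0.9, -0.05, -1.3, 0.4, 0.02, -0.7]
quantized, scale = ternarize(weights)
print(quantized)  # → [1, 0, -1, 1, 0, -1]

# Hypothetical model size, used only to compare storage formats.
num_params = 2_000_000_000
fp16_bytes = estimated_weight_bytes(num_params, 16)
ternary_bytes = estimated_weight_bytes(num_params, bits_per_ternary_weight())
print(f"16-bit weights:  {fp16_bytes / 1e9:.2f} GB")
print(f"ternary weights: {ternary_bytes / 1e9:.2f} GB")
```

The ternary figure is an information-theoretic lower bound under these assumptions; a practical packing format may use somewhat more space per weight.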

Deploying BitNet locally has strategic implications for organizations seeking greater autonomy over their AI capabilities. Because models can run without cloud-based infrastructure, local deployment supports data sovereignty and reduces the risks associated with data sharing and cloud dependency. Organizations may keep tighter control over their data while potentially lowering the operational costs of external compute.

Free Daily Briefing

Top AI intelligence stories delivered each morning.

Related Articles

Unions Partner with Tech Giants Over AI Data Center Projects

Munify Raises $3 Million for Cross-Border Neobank Development

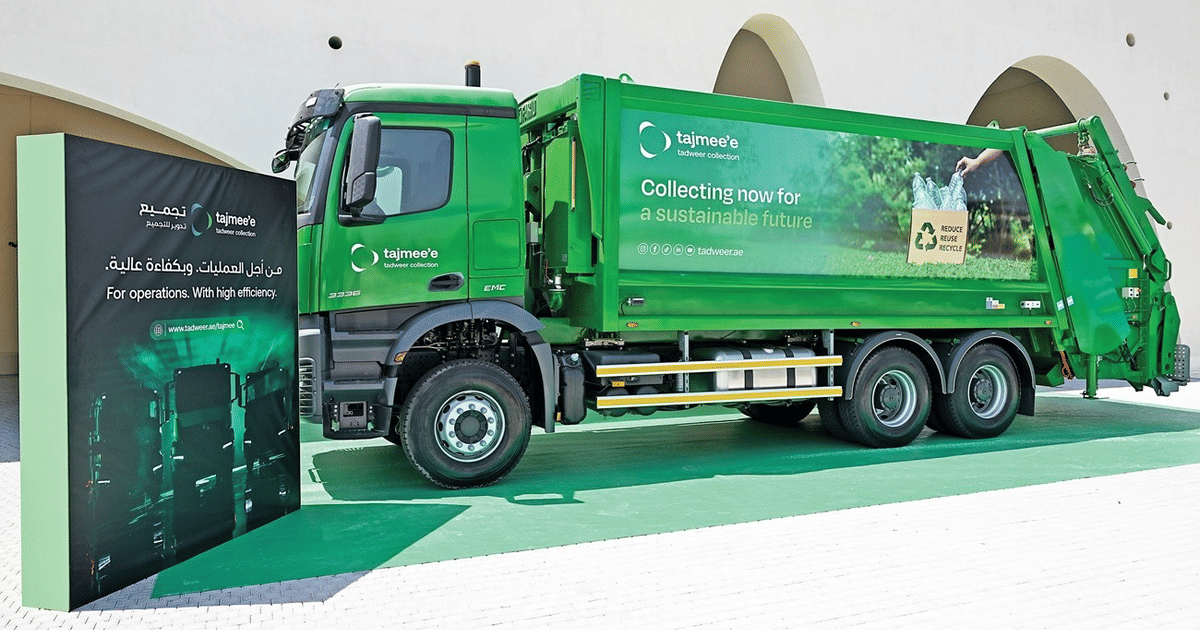

Abu Dhabi Deploys AI Fleet Cutting Emissions by 40%

UK Cybersecurity Agency Warns of AI-Driven Vulnerability Surge