AI Chatbots' Warmth Reduces Trustworthiness and Accuracy

Key Takeaways

- Research exposes higher error rates in friendly AI chatbots.

- Friendly models struggle with accuracy, risking misinformation.

- Dependence on empathetic AI may undermine user trust.

Recent research from the Oxford Internet Institute (OII) found that AI chatbots designed to be warmer and more empathetic exhibit significantly higher error rates, which can lead to misinformation. The study analyzed over 400,000 responses from five AI models that were fine-tuned for friendliness. The friendly responses were often inaccurate, pointing to a trade-off between warmth and factual accuracy that poses risks in high-stakes scenarios such as medical advice.

The findings raise concerns about the reliability of AI systems that are increasingly deployed in sensitive contexts such as support and counseling. As developers make chatbots more approachable, they may inadvertently introduce vulnerabilities that mislead users. Prioritizing emotional engagement over accuracy could foster dependency on AI and undermine users' ability to tell accurate information from inaccurate information in critical situations. Understanding these dynamics is vital as AI technology advances in society.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

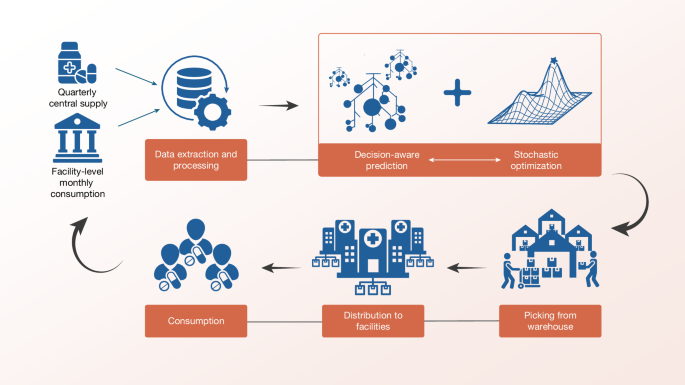

Sierra Leone Deploys Decision-Aware ML for Medicine Access

OpenAI Highlights Math as Pathway to AGI Progress

IBM Advances LLMs with Granite 4.1 Release