Meta and Microsoft Streamline Local AI with ONNX

Key Points

- The ONNX format eases integration of cloud-trained AI models into local environments.

- AMD Ryzen AI hardware improves processing efficiency and energy use.

- Local deployment supports national AI autonomy.

Meta (then Facebook) and Microsoft introduced the ONNX (Open Neural Network Exchange) format in 2017 to make it easier to bring AI models trained in the cloud into local IT environments. The format, which has been overseen by the Linux Foundation since 2019, lets a model run across platforms and eases the migration problems caused by differences between frameworks such as PyTorch and TensorFlow. Combined with hardware optimizations, such as AMD Ryzen AI processors with dedicated Neural Processing Units (NPUs), ONNX enables high-performance AI applications on local machines.
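To make the local-execution idea concrete, here is a minimal Python sketch of how an application might pick a hardware backend for an ONNX model and fall back to the CPU when no accelerator is present. It assumes the ONNX Runtime Python package (`onnxruntime`) is the engine in use, which the article does not state. The preference order and the helper names `pick_providers` and `open_session` are hypothetical and chosen only for illustration.

```python
"""Sketch: choose an ONNX Runtime execution provider with a CPU fallback."""

# Assumed preference order for illustration: NPU first, then GPU, then CPU.
PREFERRED = (
    "VitisAIExecutionProvider",  # provider used by AMD's Ryzen AI software for the NPU
    "DmlExecutionProvider",      # DirectML, typically a GPU on Windows
    "CPUExecutionProvider",      # default CPU provider in ONNX Runtime
)


def pick_providers(available, preferred=PREFERRED):
    """Return the preferred providers that are actually available, in order."""
    chosen = [name for name in preferred if name in available]
    if not chosen:
        raise RuntimeError("no usable execution provider found")
    return chosen


def open_session(model_path):
    """Load an .onnx file with the best available provider (needs onnxruntime)."""
    # Imported lazily so pick_providers() stays dependency-free.
    import onnxruntime as ort

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

With `onnxruntime` installed, `open_session("model.onnx")` would load a model file (the path here is a placeholder) exported from a framework such as PyTorch. Which providers actually appear depends on the installed ONNX Runtime build and drivers.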

Strategically, these developments point to a shift towards stronger local AI capabilities that do not depend on external cloud services. By migrating cloud-trained models and running them on their own hardware, organizations can strengthen data sovereignty and reduce their dependence on foreign tech infrastructure. The initiative could therefore increase national autonomy in AI development and let businesses operate more efficiently and innovatively in their own environments.

Free Daily Briefing

Top AI intelligence stories delivered each morning.

Related Articles

Unions Partner with Tech Giants Over AI Data Center Projects

Munify Raises $3 Million for Cross-Border Neobank Development

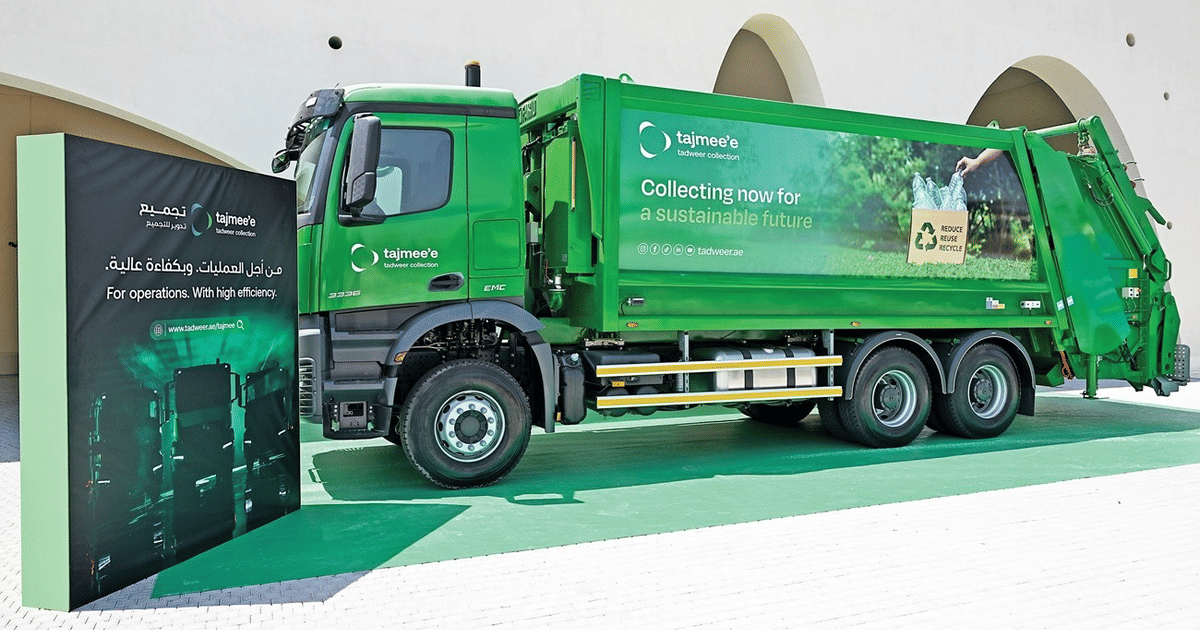

Abu Dhabi Deploys AI Fleet Cutting Emissions by 40%

UK Cybersecurity Agency Warns of AI-Driven Vulnerability Surge