Research Unveils New Method for Uncertainty Calibration in A

Key Takeaways

- New training method improves AI predictive accuracy.

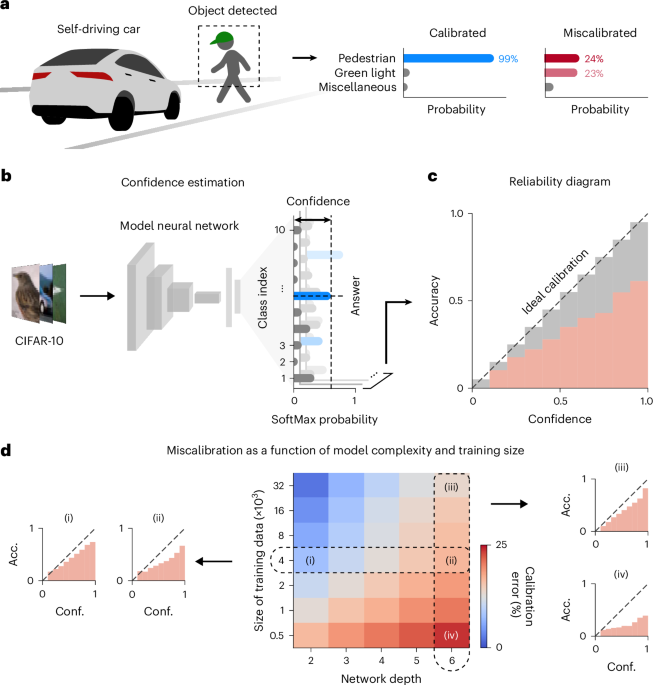

- Calibration approach addresses overconfidence in model outputs.

- Potential applications in real-world machine learning systems.

The paper presents a novel approach to uncertainty calibration in machine learning, the task of making a model's predictive confidence match its actual accuracy. Current models are often overconfident, and the research identifies traditional initialization methods in deep learning as a major contributor to the problem. The proposed warm-up technique, inspired by neurodevelopment, first exposes a network to random noise and labels and only then trains it on real data, which the authors report yields improved calibration.
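The two-phase schedule described above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation. It assumes that "random noise and labels" means Gaussian noise inputs paired with uniformly random class labels, and it uses a tiny softmax classifier as a stand-in for a deep network. All names (`LinearClassifier`, `noise_warmup`, `train`, `mean_max_confidence`) and all hyperparameters are hypothetical.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class LinearClassifier:
    """Tiny softmax classifier; a stand-in for a deep network (assumption)."""

    def __init__(self, n_features, n_classes, rng, scale=1.0):
        # Conventional random initialization of the weights.
        self.w = [[rng.gauss(0.0, scale) for _ in range(n_features)]
                  for _ in range(n_classes)]
        self.b = [0.0] * n_classes

    def predict_proba(self, x):
        logits = [sum(wi * xi for wi, xi in zip(row, x)) + b
                  for row, b in zip(self.w, self.b)]
        return softmax(logits)

    def sgd_step(self, x, y, lr):
        """One SGD step on the cross-entropy loss for a single example."""
        probs = self.predict_proba(x)
        for k in range(len(self.w)):
            # Gradient of cross-entropy with respect to logit k.
            grad = probs[k] - (1.0 if k == y else 0.0)
            for j in range(len(x)):
                self.w[k][j] -= lr * grad * x[j]
            self.b[k] -= lr * grad


def noise_warmup(model, n_features, n_classes, steps, lr, rng):
    """Phase 1: train on noise inputs paired with random labels (assumed reading)."""
    for _ in range(steps):
        x = [rng.gauss(0.0, 1.0) for _ in range(n_features)]
        y = rng.randrange(n_classes)
        model.sgd_step(x, y, lr)


def train(model, data, epochs, lr):
    """Phase 2: ordinary training on real (input, label) pairs."""
    for _ in range(epochs):
        for x, y in data:
            model.sgd_step(x, y, lr)


def mean_max_confidence(model, inputs):
    """Average of the top predicted probability over a set of inputs."""
    return sum(max(model.predict_proba(x)) for x in inputs) / len(inputs)
```

In use, one would build the model, call `noise_warmup`, and then call `train` on the real dataset. Because the labels in the first phase carry no information about the inputs, that phase tends to pull the freshly initialized model's confidence down toward chance level before it sees any real data. Comparing `mean_max_confidence` before and after the warm-up makes this visible in the toy setting.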

The approach has significant implications for deploying machine learning systems across a range of applications. By aligning confidence more closely with accuracy, it is reported to improve how models handle both in-distribution and out-of-distribution inputs. If the results hold up in practice, the technique could make AI systems more reliable in critical settings.
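The alignment of confidence with accuracy is commonly quantified with the expected calibration error (ECE). The sketch below shows that standard metric. The summary does not say which metric the paper uses, so the choice of ECE, the function name `expected_calibration_error`, and the default of 10 equal-width bins are assumptions made for illustration.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between mean confidence and accuracy per bin.

    confidences: top predicted probability for each example, in [0, 1].
    correct: booleans, True where the prediction was right.
    """
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    n = len(confidences)
    if n == 0:
        raise ValueError("at least one prediction is required")

    # Group predictions into equal-width confidence bins.
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, hit))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, h in members if h) / len(members)
        # Each bin contributes in proportion to the share of examples it holds.
        ece += (len(members) / n) * abs(avg_conf - accuracy)
    return ece
```

A model that reports 90% confidence and is right 9 times out of 10 scores close to zero. A model that reports 90% confidence but is right only half the time scores about 0.4, which is the overconfidence pattern the article describes.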