Belief Graphs Enhance LLMs in Multi-Agent Reasoning

Key Takeaways

- New research shows explicit belief graphs can improve LLM performance, especially for weaker models

- How belief graphs are integrated determines how much they help

- Findings inform AI model development strategies


Recent research published on arXiv examines whether explicit belief graphs improve the performance of large language models (LLMs) on cooperative multi-agent reasoning tasks. Across more than 3,000 controlled trials with four LLM families playing the cooperative card game Hanabi, the researchers found that belief graphs can significantly help weaker models when they are integrated appropriately. Strong models gain little when the graphs are merely supplied as prompt context. Using the graphs to gate action selection instead yields structural advantages, particularly on second-order Theory of Mind tasks (reasoning of the form "I think that my partner thinks...").
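The article does not describe the paper's belief-graph schema or gating mechanism. As a hypothetical illustration of the difference between the two integration styles, the Python sketch below contrasts serializing a belief graph into the prompt with using it to filter the model's proposed actions. All names (`BeliefGraph`, `as_prompt_context`, `gate_allows`, `select_action`), the action-tuple format, and the gating rules are assumptions for illustration, not the study's implementation. The only game rule it relies on is Hanabi's: a card is playable when its rank is one above the current pile of its color.

```python
from dataclasses import dataclass

Card = tuple[str, int]  # hypothetical encoding: (color, rank)


@dataclass
class BeliefGraph:
    """Hypothetical belief graph for one agent in two-player Hanabi."""
    # First-order beliefs: identities each of my own card slots could still be.
    my_slots: dict[int, set[Card]]
    # Second-order beliefs: attributes ("color", "rank") I believe my partner
    # already knows about each of their own card slots.
    partner_knows: dict[int, set[str]]


def as_prompt_context(graph: BeliefGraph) -> str:
    """Prompt-context variant: the graph becomes text the model is free to ignore."""
    lines = [f"slot {s}: could be {sorted(opts)}" for s, opts in sorted(graph.my_slots.items())]
    lines += [f"partner slot {s} knows: {sorted(k)}" for s, k in sorted(graph.partner_knows.items())]
    return "\n".join(lines)


def is_playable(card: Card, fireworks: dict[str, int]) -> bool:
    # Hanabi rule: a card is playable if its rank is one above its color's pile.
    color, rank = card
    return fireworks.get(color, 0) + 1 == rank


def gate_allows(action: tuple, graph: BeliefGraph, fireworks: dict[str, int]) -> bool:
    """Gating variant: reject actions that contradict the belief graph."""
    kind = action[0]
    if kind == "play":
        options = graph.my_slots.get(action[1], set())
        # Play only if every identity the graph still allows is playable.
        return bool(options) and all(is_playable(c, fireworks) for c in options)
    if kind == "hint":
        _, slot, attribute = action
        # Second-order check: skip hints the partner is believed to know already.
        return attribute not in graph.partner_knows.get(slot, set())
    return kind == "discard"


def select_action(ranked: list[tuple], graph: BeliefGraph, fireworks: dict[str, int]) -> tuple:
    """Return the model's highest-ranked proposal that passes the gate."""
    for action in ranked:
        if gate_allows(action, graph, fireworks):
            return action
    return ("discard", 0)  # hypothetical fallback when nothing passes


# Usage: the model's ranked proposals (assumed to be parsed from its output).
graph = BeliefGraph(
    my_slots={0: {("red", 1)}, 1: {("red", 2), ("blue", 1)}},
    partner_knows={0: {"color"}},
)
fireworks = {"red": 0, "blue": 0}
ranked = [("play", 1), ("hint", 0, "color"), ("hint", 0, "rank"), ("play", 0)]
print(select_action(ranked, graph, fireworks))  # ('hint', 0, 'rank')
```

Here the model's top choice, playing slot 1, is rejected because that card might be the red 2, and its color hint is filtered out because the partner is believed to know that color already. In the prompt-context variant, the same graph would appear only as text, and nothing would stop either action.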

These findings have direct implications for AI architectures designed for multi-agent cooperation. The study highlights that the way a belief graph is integrated, not just its presence, determines how much it helps, so integration architectures need to be tailored to exploit it. As AI systems move toward greater autonomy and collaboration, the research points to the need for continued refinement of model training and integration strategies. Such refinements could improve how LLMs interact and reason together, enabling more sophisticated AI deployments.

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

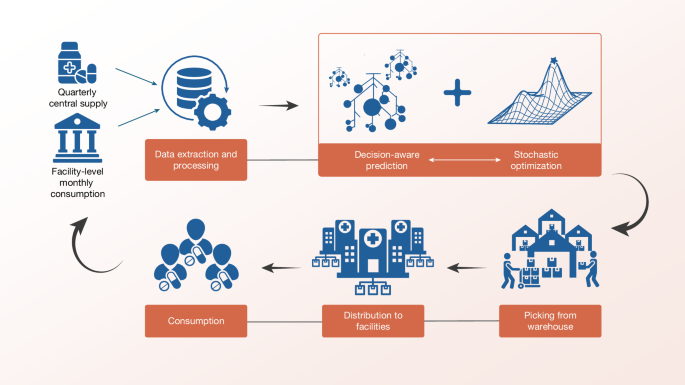

Sierra Leone Deploys Decision-Aware ML for Medicine Access

OpenAI Highlights Math as Pathway to AGI Progress

IBM Advances LLMs with Granite 4.1 Release