GGML Partners with HF to Enhance Local AI Infrastructure

Key Points

- GGML joins Hugging Face to support Local AI development.

- Focus on improving local inference capabilities with community resources.

- Enhances autonomy in AI, reducing dependency on centralized cloud systems.

GGML, the team behind llama.cpp, is joining Hugging Face (HF) to bolster the development and sustainability of Local AI. The partnership emphasizes preserving the autonomy and leadership of the llama.cpp project while drawing on HF’s resources for long-term growth. Because llama.cpp is pivotal for local inference, the collaboration aims to improve user experience and model deployment efficiency, including seamless integration with the transformers library.

The strategic significance of this alliance lies in its commitment to making open-source superintelligence accessible, in line with a broader trend toward more capable local AI. By strengthening the infrastructure for local inference, the partnership seeks to reduce reliance on cloud solutions, thereby fortifying national technology independence and fostering AI solutions that are community-driven and sustainable, ensuring that the future of AI remains grounded in local autonomy and innovation.

Free Daily Briefing

Top AI intelligence stories delivered each morning.

Related Articles

Unions Partner with Tech Giants Over AI Data Center Projects

Munify Raises $3 Million for Cross-Border Neobank Development

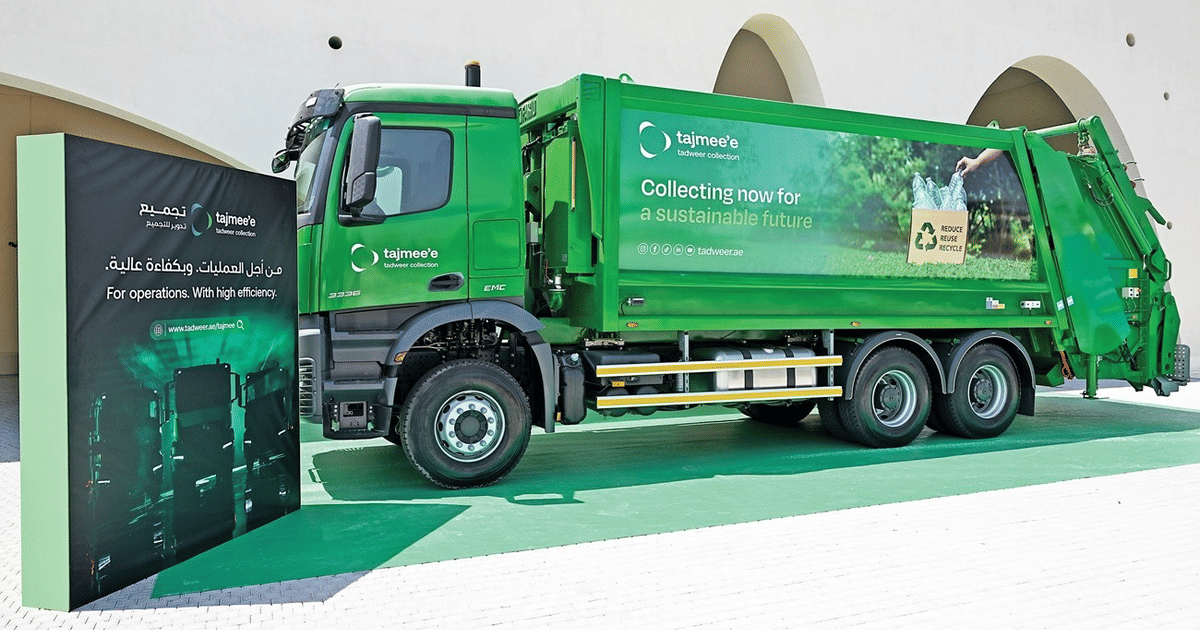

Abu Dhabi Deploys AI Fleet Cutting Emissions by 40%

UK Cybersecurity Agency Warns of AI-Driven Vulnerability Surge