Google Dominates AI Compute with TPU Chips

Key Takeaways

- Google now holds 25% of global AI compute capacity

- Shift towards TPU technology reduces dependency on Nvidia

- Increased control raises concerns over market influence

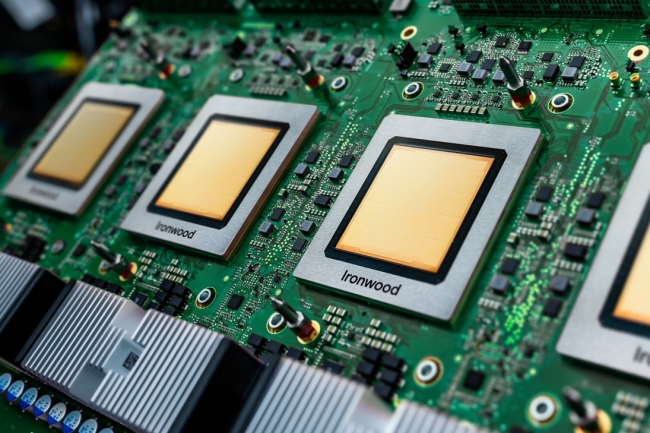

Google has solidified its position as the largest holder of dedicated AI compute, primarily through its proprietary Tensor Processing Unit (TPU) chips. According to a study from Epoch AI, hyperscalers control more than 60% of global AI compute, with Google alone accounting for approximately 25%. This share allows Google to set the pace of AI development while relying less on Nvidia than its competitors, which depend heavily on Nvidia's GPU infrastructure.

The implications of Google's substantial AI compute capacity extend to market dynamics and to national sovereignty over data and technology. With capacity estimated at the equivalent of 5 million Nvidia H100 units, most of it from custom TPU chips, Google demonstrates a viable path to greater AI self-sufficiency. This shift strengthens Google's competitive edge, but it also raises concerns about pricing and access in a market increasingly dominated by a small number of players, which could limit diversity and innovation in the AI landscape.