New Approach for Debugging Large Language Models

Key Takeaways

- Systematic method for debugging large language models released.

- Enhances evaluation, interpretability, and error analysis practices.

- Aims to improve reliability and scalability of AI systems.

Large language models (LLMs) have become integral to many AI applications, yet debugging them remains a significant challenge. This article presents a systematic approach to LLM debugging that treats models as observable systems and offers structured methods spanning issue detection through model refinement. By integrating evaluation, interpretability, and error analysis, practitioners can diagnose weaknesses, refine model parameters, and adapt data for fine-tuning, even where standardized benchmarks are lacking.
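As a rough illustration of the error-analysis step, the sketch below groups a model's failures by a hand-assigned category so that weak slices stand out. It is not the method from the source. The function `error_report`, the `tag` field, and the stand-in `toy_model` are all hypothetical names chosen for this example, and a real setup would call an actual model and use a task-appropriate correctness check.

```python
from collections import Counter, defaultdict


def error_report(cases, model, is_correct):
    """Run `model` on every case and summarize failures per tag.

    `cases` is a list of dicts with "prompt", "expected", and "tag" keys
    (an assumed schema for this sketch).
    """
    totals = Counter()
    failures = defaultdict(list)
    for case in cases:
        output = model(case["prompt"])
        totals[case["tag"]] += 1
        if not is_correct(output, case["expected"]):
            # Keep the failing prompt and output for manual inspection.
            failures[case["tag"]].append((case["prompt"], output))
    return {
        tag: {
            "failure_rate": len(failures[tag]) / totals[tag],
            "examples": failures[tag],
        }
        for tag in totals
    }


def toy_model(prompt):
    """Hypothetical stand-in for an LLM: adds two integers but drops signs."""
    left, right = prompt.split("+")
    return str(abs(int(left)) + abs(int(right)))


cases = [
    {"prompt": "2+3", "expected": "5", "tag": "positive"},
    {"prompt": "10+7", "expected": "17", "tag": "positive"},
    {"prompt": "-2+3", "expected": "1", "tag": "negative"},
    {"prompt": "-4+-1", "expected": "-5", "tag": "negative"},
]

report = error_report(cases, toy_model, lambda out, exp: out == exp)
for tag, stats in report.items():
    print(tag, stats["failure_rate"], stats["examples"])
```

Slicing failures this way is one simple way to work without a standardized benchmark: the per-tag failure rates point to where the model is weak, and the collected examples can seed further inspection or fine-tuning data.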

Related Sovereign AI Articles

AI Evaluation Costs Surge as Compute Bottleneck Emerges

Research29 Apr

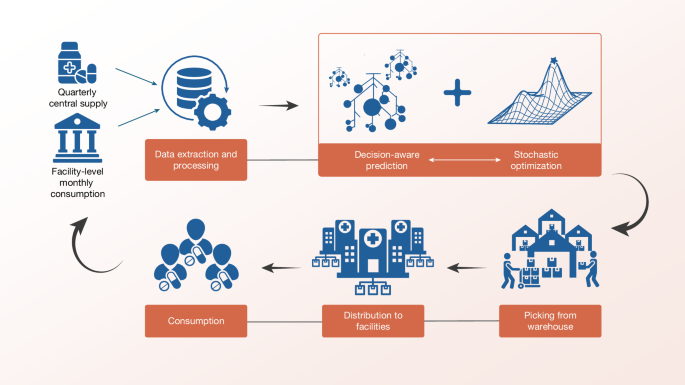

Sierra Leone Deploys Decision-Aware ML for Medicine Access

Research29 Apr

OpenAI Highlights Math as Pathway to AGI Progress

Research29 Apr

IBM Advances LLMs with Granite 4.1 Release

Research29 Apr

AI Chatbots' Warmth Reduces Trustworthiness and Accuracy

Research29 Apr